Communication, punishment and common pool resources

Economic games have been discussed several times on this blog. Their extreme simplicity makes them attractive tools for an experimental approach, but it also makes them all too perfect examples of a lack of naturalness and ecological validity. Still, many would argue that, together with formal modeling, these games have permitted important theoretical advances and demonstrated, for instance, that punishment of defectors plays a crucial role in explaining human cooperation. But is it really so? How reliable are the insights gained from simple games such as the ultimatum or the public good games when, in real life, the dilemmas people face are much more complex, both in the range of choices available and in the dynamics of interaction over time? Elinor Ostrom, winner of the 2009 Nobel Prize in Economics (which we hailed at the time), is uniquely well placed to understand the complexity of the dilemmas that people face when they have to solve real common goods problems, having studied many such dilemmas in real life herself. She has been developing new ways to test experimentally how participants react when faced with dilemmas that are more complex than those of most experiments so far, while maintaining an adequate degree of control. The results from one of these experimental studies have recently been published in an article in Science (328: 613-617): “Lab Experiments for the Study of Social-Ecological Systems” by Marco A. Janssen, Robert Holahan, Allen Lee, and Elinor Ostrom. They put the more standard approaches in perspective and strongly suggest rethinking some of their conclusions.

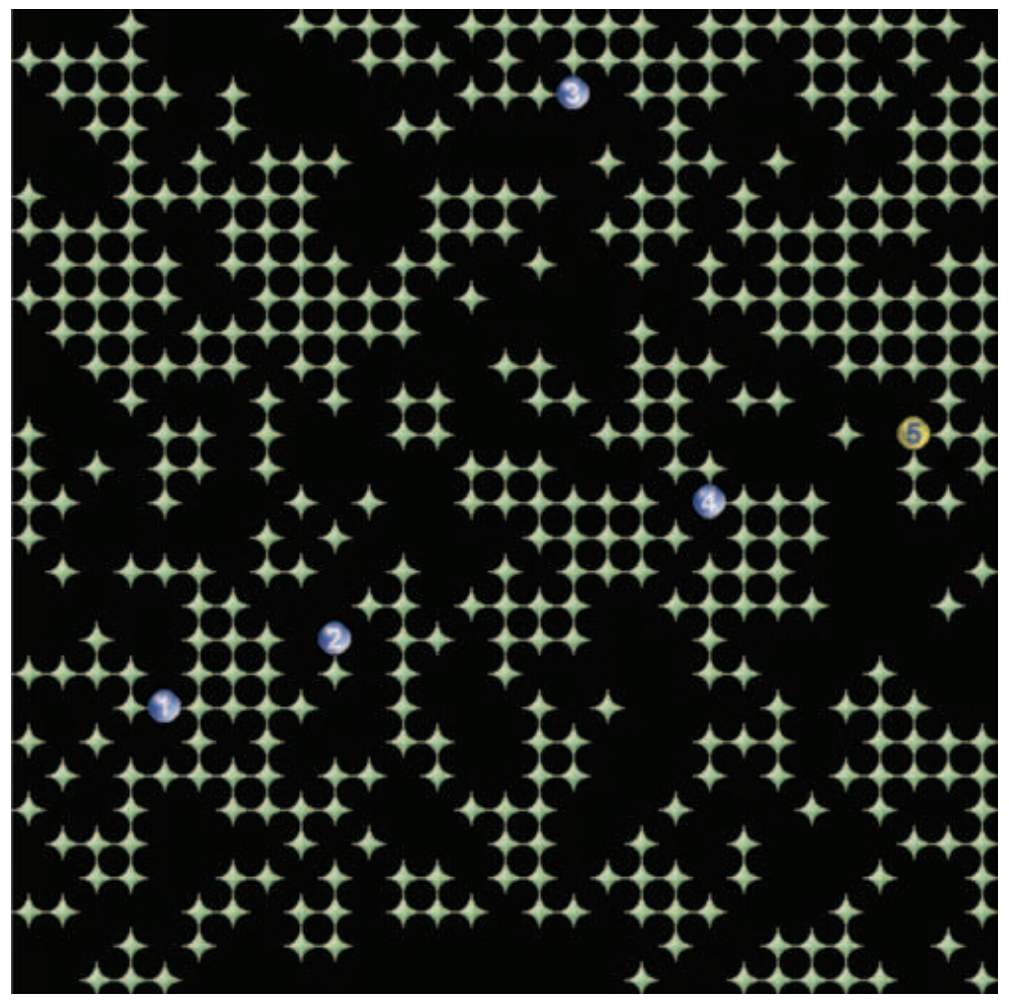

A screen shot of the experimental environment of the study of Janssen et al.

The green star-shaped figures are resource tokens; the circles are avatars of the participants (yellow is the participant’s own avatar; blue represents other participants).

In the game Ostrom and her colleagues developed, participants are faced with a lattice of resources that they can gather by moving an avatar. Several participants share the same lattice, and therefore the same resources. To make the game interesting, the resources can regenerate themselves, but only to the extent that some are left. This provides a simple but more adequate simulation of a common-pool resource, one in which the taking of resources by one individual depletes a pool that is common to all, as is the case with fishing, for instance.
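To make the idea of a resource that regenerates "only to the extent that some are left" concrete, here is a minimal sketch in Python. The specific rule is an assumption chosen for illustration, not necessarily the one used by Janssen and colleagues: an empty cell refills with a probability proportional to the share of its eight neighbouring cells that still hold a token. The names `regrow`, `count_tokens` and `max_prob` are hypothetical.

```python
import random


def regrow(grid, max_prob=0.1, rng=random):
    """One regrowth step on a lattice of 0s (empty) and 1s (token).

    Assumed rule, for illustration only: an empty cell refills with
    probability max_prob * (number of occupied neighbours / 8).
    """
    rows, cols = len(grid), len(grid[0])
    new_grid = [row[:] for row in grid]
    for r in range(rows):
        for c in range(cols):
            if grid[r][c]:
                continue  # occupied cells are left as they are
            neighbours = sum(
                grid[r + dr][c + dc]
                for dr in (-1, 0, 1)
                for dc in (-1, 0, 1)
                if (dr, dc) != (0, 0)
                and 0 <= r + dr < rows
                and 0 <= c + dc < cols
            )
            # With no occupied neighbours the probability is 0: no regrowth.
            if rng.random() < max_prob * neighbours / 8:
                new_grid[r][c] = 1
    return new_grid


def count_tokens(grid):
    """Total number of tokens currently on the lattice."""
    return sum(map(sum, grid))


# A lattice harvested to zero never recovers under this rule.
empty = [[0] * 5 for _ in range(5)]
print(count_tokens(regrow(empty)))
```

Under a rule of this kind, a group that strips the lattice bare destroys its own future income, whereas a group that leaves tokens standing keeps the resource growing. That is the dilemma the participants face.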

To test the effect of costly punishment versus communication, they performed a series of experiments using six different treatments. Each treatment consisted of three consecutive 4-min periods of costly punishment (P), communication (C), or a combination of both (CP), and three consecutive 4-min periods in which neither communication nor punishment (NCP) was allowed. All treatments thus consisted of six decision periods, each lasting 4 min. Half the treatments started with NCP and the others finished with NCP. Here’s a summary of the most interesting findings:

In line with many previous results, communication dramatically improved participants’ ability to save resources and, therefore, their overall scores (since they did not deplete the resources too quickly). More interestingly, performance (in terms of ability to exercise self-restraint and maximize overall resource intake) remained at a very good level when participants who had been able to communicate could no longer do so, even in the absence of punishment. Contrary to what had been observed in many more standard economic games, punishment alone had no effect. The interpretation offered by the authors is quite interesting. According to them, punishment was ineffective in this setting because punished participants were unable to interpret the punishment and to react accordingly. Finally, punishment had a deleterious effect when coupled with communication. Participants who were given the opportunity to communicate and punish first, before being deprived of both possibilities, stopped cooperating in the second phase. Again, they did not do this when only communication, and not punishment, was available in the first phase. A likely explanation is that participants saw themselves as having ‘behaved’ in the first phase only under the threat of punishment, and so stopped cooperating as soon as the threat was lifted.

These results are especially interesting in light of the many previous results indicating that punishment can be very efficient at sustaining cooperation in repeated ultimatum games or public good games. In a typical public good game, participants can contribute money to a common pool; the common pool is then multiplied by a given factor (say, 2) and divided evenly among all participants. In such a game, everybody would be better off if everybody contributed the full amount of her initial endowment… but each individual can get an even higher payoff by not contributing at all and still getting a share of the common pool. When participants play this game repeatedly, cooperation crumbles very quickly, as those participants who give more realize that others give less and adjust their contributions downwards. However, when participants have the ability to punish those who do not contribute enough, a significant level of cooperation can be maintained.
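The payoff logic of such a game can be worked through with a short Python sketch. The multiplier of 2 comes from the description above; the group of four players and the endowment of 10 are assumptions chosen purely for illustration, and `public_goods_payoffs` is a hypothetical helper name.

```python
def public_goods_payoffs(contributions, endowment=10.0, multiplier=2.0):
    """Payoff of each player in one round of a public good game.

    Each player keeps whatever she does not contribute, plus an equal
    share of the multiplied common pool.
    """
    n = len(contributions)
    share = sum(contributions) * multiplier / n
    return [endowment - c + share for c in contributions]


# Assumed setup: four players, each with an endowment of 10.
print(public_goods_payoffs([10, 10, 10, 10]))  # everyone contributes fully
print(public_goods_payoffs([0, 10, 10, 10]))   # the first player free-rides
print(public_goods_payoffs([0, 0, 0, 0]))      # nobody contributes
```

With these numbers, full cooperation gives everyone 20, and universal defection leaves everyone with only the initial 10. A lone free-rider does best of all, earning 25 while the three contributors drop to 15. Note that the dilemma only arises when the group is larger than the multiplier: with a multiplier of 2 and just two players, each unit contributed would come straight back to its contributor.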

It may seem as if typical public good games have a lot in common with the games participants were playing in the present experiment. A major difference, however, is that in Janssen and colleagues’ experiment, participants’ behavior is more complex: instead of a single number representing the degree of cooperation, here each participant can take more or fewer resources, in different places, at different times, etc. Thus, when a participant is punished, it can be hard for her to interpret what exactly she is being punished for. It is then likely that the participant will either get angry at what is perceived as an unfair punishment, or simply fail to understand what should be corrected in her behavior.

This research is important as it is a welcome first step towards designing more ecologically valid experiments. It suggests that this can still be done within the confines of the psychology laboratory. More importantly, it also shows that even such seemingly small modifications as those introduced here can produce dramatically different results. Given that it can easily be argued that most of the situations we encounter are more complex than the one explored in the present experiment, it is conceivable that the effects described here are even stronger in real life. Or it could be that what happens in real life is quite different from what happens both in standard economic games and in these somewhat more realistic ones. In either case, the strong conclusions drawn from previous experiments (regarding the importance of punishment in the evolution of human cooperation, for instance) should not be taken as well-established.

1 Comment

scott howard 2 July 2010 (09:24)

I suspect that the study has a common flaw related to subject selection. Are all the participants college students? What happens to the study if you include the types of people who would turn over the computer if they were not winning? These are the “Take this job and shove it” types; those who might quit a good job in anger even knowing the economic consequences are dire. Cooperation also assumes a future-oriented view of life. Test some homeless people who are only present-oriented (take as much as you can now because you never know what will be available later). So while the study may have implications for middle-class consumers, it might have limitations for the population at large.